Managing Kubernetes clusters can become an overwhelming challenge. But finding the right Kubernetes monitoring tool can help you manage and optimize your system. Read on for some best practices to enhance your Kubernetes performance.

What is Kubernetes? Here are the basics.

For some background, see What is Kubernetes and how should I monitor it? Basically, Kubernetes is an orchestration system that schedules, scales, and maintains the containers that make up the infrastructure of any modern application. With Kubernetes, you can automate health checks, automate operations, and abstract infrastructure.

To monitor a large number of short-lived containers, Kubernetes has built-in tools and APIs that help you understand the performance of your applications. For visibility into the performance of your entire application, you need a monitoring strategy that takes advantage of Kubernetes, even if containers running your applications are continuously moving between hosts or being scaled up and down.

What metrics should you monitor in Kubernetes?

There are four components that you need to monitor in a Kubernetes environment:

- Infrastructure (worker nodes)

- Containers

- Applications

- The Kubernetes cluster (control plane)

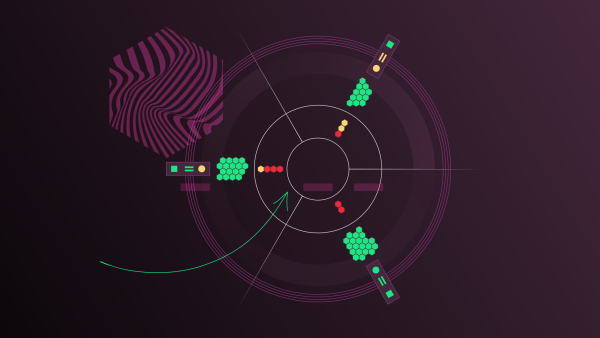

For example, you’ll want to monitor the following metrics in your Kubernetes application, as shown in the example dashboard from New Relic.

- Resources used

- Namespaces per cluster

- Container CPU usage, percentage used vs limit

- Container CPU cores used

- Number of Kubernetes objects

- Pods by namespace

- Container memory usage, percentage used vs limit

- Container MB of memory used

- Container restarts

- Volume usage, percentage used

- Missing pods by deployment
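Several of these metrics compare usage against a configured limit. As a rough illustration, here is a minimal Python sketch of how a "percentage used vs limit" value could be derived from Kubernetes quantity strings such as 500m (CPU) or 256Mi (memory). The function names are hypothetical, and the parser covers only a subset of the quantity suffixes.

```python
from decimal import Decimal

# Subset of the suffixes Kubernetes accepts in resource quantities.
# Binary suffixes (Ki, Mi, ...) are powers of 2; the rest are powers of 10.
_SUFFIXES = {
    "Ki": Decimal(2) ** 10,
    "Mi": Decimal(2) ** 20,
    "Gi": Decimal(2) ** 30,
    "Ti": Decimal(2) ** 40,
    "n": Decimal("1e-9"),
    "u": Decimal("1e-6"),
    "m": Decimal("1e-3"),
    "k": Decimal(10) ** 3,
    "M": Decimal(10) ** 6,
    "G": Decimal(10) ** 9,
    "T": Decimal(10) ** 12,
}


def parse_quantity(quantity: str) -> Decimal:
    """Convert a quantity such as '500m' or '256Mi' to base units (cores or bytes)."""
    for suffix, multiplier in _SUFFIXES.items():
        if quantity.endswith(suffix):
            return Decimal(quantity[: -len(suffix)]) * multiplier
    # No recognized suffix: treat the value as a plain number.
    return Decimal(quantity)


def percent_of_limit(used: str, limit: str) -> float:
    """Return usage as a percentage of the configured limit."""
    limit_value = parse_quantity(limit)
    if limit_value == 0:
        raise ValueError("limit must be greater than zero")
    return float(parse_quantity(used) / limit_value * 100)
```

For instance, a container using 250m of CPU against a 500m limit is at 50% of its limit.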

Best practices to improve Kubernetes performance

To enhance Kubernetes performance, focus on defining resource limits, using optimized and lightweight container images, and deploying clusters closer to your users.

Define resource limits

Kubernetes orchestrates containers at scale with the Kubernetes scheduler, which assigns pods to nodes (physical or virtual machines). The scheduler determines which nodes are valid placements for each pod in the scheduling queue according to constraints and available resources. If you don’t specify resource requests and limits for your containers, the scheduler has no information about how much CPU and memory a pod needs, so it can place the pod on any node regardless of that node’s remaining capacity. This is not ideal, especially if various parts of your application require different amounts of resources to run.
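To make the placement step more concrete, here is a deliberately simplified Python sketch of resource-based filtering. It is a toy model, not the real scheduler: the `Node` class, its field names, and `feasible_nodes` are hypothetical, and the real scheduler applies many more constraints.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    allocatable_cpu_m: int       # CPU the node can hand out, in millicores
    allocatable_mem_mi: int      # memory the node can hand out, in MiB
    requested_cpu_m: int = 0     # sum of CPU requests of pods already placed
    requested_mem_mi: int = 0    # sum of memory requests of pods already placed


def feasible_nodes(nodes, cpu_request_m, mem_request_mi):
    """Return the names of nodes whose unreserved capacity covers the pod's requests."""
    return [
        node.name
        for node in nodes
        if node.allocatable_cpu_m - node.requested_cpu_m >= cpu_request_m
        and node.allocatable_mem_mi - node.requested_mem_mi >= mem_request_mi
    ]


nodes = [
    Node("node-a", 2000, 4096, requested_cpu_m=1800, requested_mem_mi=3900),
    Node("node-b", 4000, 8192, requested_cpu_m=1000, requested_mem_mi=2048),
]

# A pod that declares its needs is only matched to nodes with room for it.
print(feasible_nodes(nodes, cpu_request_m=500, mem_request_mi=512))

# A pod with no requests looks like it fits everywhere, even on a nearly full node.
print(feasible_nodes(nodes, cpu_request_m=0, mem_request_mi=0))
```

In this model, a pod without requests passes the filter on every node, which is how it can end up on a node that is already close to capacity.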

If the Kubernetes scheduler assigns a pod to a node without enough memory, the node can run out of memory, which affects cluster stability. If the scheduler assigns a pod to a node without enough CPU, all of the applications running on that node could get slower because they must share a limited amount of CPU. When a node runs low on resources, it starts the eviction process and terminates pods, starting with pods without resource requests.

So, if you run without requests and limits and the node comes under resource pressure, your pods are the first to be evicted. If you don’t see performance problems in this situation, you are most likely overprovisioning, which means you are paying for resources that aren’t being used.
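Kubernetes summarizes how a pod declares its resources as a Quality of Service (QoS) class: Guaranteed, Burstable, or BestEffort. Pods with no requests or limits are BestEffort, which generally makes them early candidates for eviction. The following Python sketch approximates that classification. The `qos_class` function is hypothetical, and it compares quantities as plain strings, so it is a simplification of what Kubernetes does.

```python
def qos_class(containers):
    """Approximate a pod's QoS class.

    `containers` is a list of dicts shaped like the `resources` field of a
    container spec, for example {"requests": {"cpu": "250m"}, "limits": {}}.
    """
    any_resource_set = False
    guaranteed = True
    for resources in containers:
        requests = resources.get("requests", {})
        limits = resources.get("limits", {})
        if requests or limits:
            any_resource_set = True
        for name in ("cpu", "memory"):
            limit = limits.get(name)
            # When only a limit is set, Kubernetes defaults the request to the limit.
            request = requests.get(name, limit)
            # Simplification: quantities are compared as strings, so "0.5" and
            # "500m" are treated as different values here.
            if limit is None or request != limit:
                guaranteed = False
    if not any_resource_set:
        return "BestEffort"
    return "Guaranteed" if guaranteed else "Burstable"
```

A pod whose containers all set CPU and memory limits equal to their requests is classified as Guaranteed. A pod that sets only some values is Burstable.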

By default, Kubernetes doesn’t include tooling to view pod-level and node-level metrics over time. Without that history, engineering teams often allocate too much and are left with unused resources. This brings peace of mind, but with added cost and likely waste.

Gathering the right data is an important first step in setting good resource limits. For example, in New Relic, you can view CPU and memory for every pod in the Kubernetes cluster explorer.
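Once you have usage history, you can turn it into starting values. The Python sketch below shows one possible heuristic, which is an assumption for illustration rather than an official recommendation: set the request to the 95th percentile of observed usage and the limit to the observed peak plus some headroom. The function name and the 20% default headroom are hypothetical.

```python
def suggest_cpu_resources(samples_millicores, headroom_percent=20):
    """Suggest a CPU request and limit (in millicores) from observed usage samples."""
    if not samples_millicores:
        raise ValueError("at least one usage sample is required")
    ordered = sorted(samples_millicores)
    # Nearest-rank 95th percentile, using integer math to avoid rounding surprises.
    rank = (95 * len(ordered) + 99) // 100
    p95 = ordered[rank - 1]
    # Limit: observed peak plus headroom, rounded up to a whole millicore.
    peak = ordered[-1]
    limit = (peak * (100 + headroom_percent) + 99) // 100
    return {"request_m": p95, "limit_m": limit}
```

Treat the output as a first guess to validate under real load, not as a final setting.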

Use optimized, lightweight container images

Within Kubernetes, container images are the primary way to define applications referenced by pods and other objects. With optimized images, Kubernetes can pull them from the registry and deploy applications more quickly. Because the payload is smaller, pods built from lightweight images can also respond more quickly to changes in Kubernetes configurations.

Here are some strategies to optimize your container images for Kubernetes:

- Have a single, well-defined purpose. It’s important to keep container images as simple and easy to understand as possible. When troubleshooting, being able to directly view configurations and logs, or test container behavior, can help you reach a resolution faster.

- Be lightweight. Smaller container images make it simpler to debug problems by minimizing the amount of software involved.

- Have endpoints for readiness and health checks. Beyond conforming to the container model, it’s important to understand and work with the tooling that Kubernetes provides. For example, providing endpoints for liveness and readiness checks, or adjusting operations based on changes in the configuration or environment, can help your applications use Kubernetes’ dynamic deployment environment to the best advantage.

- Employ multistage builds to reduce the overall size of your compiled application. A multistage Docker build makes it possible to build an application and then leave any unnecessary development tools out of the final container image. This approach reduces the image’s final size.
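As an illustration of the readiness and health check endpoints mentioned in the list above, here is a minimal Python sketch using only the standard library. The paths `/healthz` and `/readyz`, the port, and the function names are assumptions chosen for the example. Your application and probe configuration may use different ones.

```python
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

# Set this event once startup work (for example, warming a cache) has finished.
ready = threading.Event()


def probe_status(path: str) -> int:
    """Map a probe path to the HTTP status code the application should return."""
    if path == "/healthz":
        # Liveness: the process is up and able to answer requests.
        return 200
    if path == "/readyz":
        # Readiness: only accept traffic after startup work is done.
        return 200 if ready.is_set() else 503
    return 404


class ProbeHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        self.send_response(probe_status(self.path))
        self.end_headers()


def serve(port: int = 8080) -> None:
    """Serve the probe endpoints until the process is stopped."""
    HTTPServer(("", port), ProbeHandler).serve_forever()
```

To try it, call `ready.set()` when the application has finished starting and then `serve()`. A liveness probe would then target `/healthz` and a readiness probe `/readyz` on the chosen port.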

Deploy Kubernetes clusters closer to your users

The geographic location of the cluster nodes that Kubernetes manages is closely related to the latency that clients experience.

Locating Kubernetes nodes near your customers can enhance the user experience. Cloud providers usually offer several geographic zones, allowing systems operators to deploy Kubernetes clusters in different global locations. For example, pods hosted on nodes located in Europe will have faster DNS resolution and lower latencies for customers in that region.

Yet, before you spawn Kubernetes clusters here and there, you need to devise a careful plan for handling multi-zone Kubernetes clusters. Each Kubernetes provider has limitations on which zones can be used for the best fault tolerance. For example, Amazon Elastic Kubernetes Service (Amazon EKS) supports a specific list of zones, while Google Kubernetes Engine offers options for multi-zonal or regional clusters, each with its own list of pros and cons.

Through the AWS partnership with Verizon’s 5G network, you can even run Kubernetes clusters on the network edge with Amazon Elastic Kubernetes Service (Amazon EKS), which can greatly reduce latency for your application’s users.

Some organizations deploy locally and then adjust their deployment strategy according to customer feedback. For this approach to work, you need to implement a monitoring system to identify latency issues before they impact your application’s users.
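One simple way to spot regions that need attention is to compare a high percentile of request latency per region against a threshold. The Python sketch below assumes you already collect latency samples per region. The function name, the region names, and the 200 ms default threshold are hypothetical.

```python
def slow_regions(latencies_ms, threshold_ms=200):
    """Return {region: p95 latency} for regions whose p95 exceeds the threshold.

    `latencies_ms` maps a region name to a list of request latencies in milliseconds.
    """
    flagged = {}
    for region, samples in latencies_ms.items():
        if not samples:
            continue  # no data for this region yet
        ordered = sorted(samples)
        # Nearest-rank 95th percentile.
        rank = (95 * len(ordered) + 99) // 100
        p95 = ordered[rank - 1]
        if p95 > threshold_ms:
            flagged[region] = p95
    return flagged


samples = {
    "europe": [40, 45, 50, 55, 60],
    "asia": [180, 220, 260, 300, 340],
}
print(slow_regions(samples))
```

Regions that show up in the result are candidates for a closer cluster or an alert.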

Monitoring Kubernetes performance to improve applications

If you want to get observability data from your cloud native environment, but you don’t want to instrument all of your Kubernetes clusters manually, look for a solution that gives you dashboards with key metrics and customized alerts.

If you’re ready to try New Relic, read our Monitoring Kubernetes blog series and try our pre-built dashboard and set of alerts for Kubernetes. It includes:

- A golden signals dashboard showing latency, errors, and throughput

- A dashboard tab with HTTP calls from your entire application that you can easily query

- Customizable alerts for various application metrics

When you sign up for a free New Relic account, you get 100GB of free data per month, one free user with full access to all of New Relic, unlimited free basic users who are able to view your reporting, and unlimited dashboards, alerts, and queries.
